I run an Amazon + Shopify + TikTok Shop e-commerce business. I use AI every single day in operations, finance and marketing - not just regular chatbots, but agentic AI that takes over my browser, completes multi-step tasks autonomously, and does in minutes what used to take hours. I'm watching my entire industry go agentic in real time.

That daily experience is what led me to one of the highest-conviction positions in my portfolio right now: the AI memory supercycle.

Here's my thesis in plain terms:

The bottleneck has shifted

Since 2022, everyone has been focused on GPUs. Nvidia became a household name and money was made. But the constraint has moved. You can build all the GPUs you want, but if you can't feed them data fast enough, they sit idle. High-Bandwidth Memory (HBM) is what feeds them. Right now, hyperscalers are paying whatever it takes to get it, and the three companies that make it - Samsung, SK Hynix, and Micron - are sold out.

This isn't a normal supply crunch. New fabs take 3-5 years to build. There is no quick fix.

China can't solve it either

A lot of people assume China will flood the market and kill pricing. It won't - at least not in any timeframe that matters for this trade. U.S. export controls block China from buying advanced HBM from the established suppliers. And its domestic alternative, CXMT, is roughly 6-8 years behind on the technology. CXMT is producing samples, not volume. The pricing power stays with the three big players through at least 2027.

The demand curve is steeper than anyone models

Here's what most analysts miss: agentic AI uses dramatically more memory than a simple chatbot query. When AI is completing 40-step browser tasks, maintaining context across an entire session, and running continuously, the memory requirement per user isn't 2x a chatbot. It's closer to 10-50x. And right now, only early adopters like e-commerce and marketing are running agents. When traditional industries - healthcare, logistics, manufacturing, legal - start deploying agents at scale, today's demand models will stop adding up.
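
To make that multiplier concrete, here is a rough back-of-envelope sketch in Python. Every number in it is an assumption I picked for illustration, not a measurement: the model shape (32 layers, 8 key/value heads, 128-dimension heads, 2-byte values) is hypothetical, and so are the token counts for a chatbot exchange and a 40-step agent session. The one thing it relies on is that a transformer's key-value cache, which sits in GPU memory during inference, grows linearly with the number of tokens held in context.

```python
def kv_cache_bytes(tokens, layers=32, kv_heads=8, head_dim=128, bytes_per_value=2):
    """Approximate KV-cache size for one session.

    Each token stores one key and one value vector (the factor of 2)
    per layer, per KV head. All default shapes are hypothetical.
    """
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Assumed workloads (illustrative only)
chatbot_tokens = 2_000        # one short question-and-answer exchange
agent_tokens = 40 * 1_500     # 40 browser steps, ~1,500 tokens of context each

chat_bytes = kv_cache_bytes(chatbot_tokens)
agent_bytes = kv_cache_bytes(agent_tokens)
ratio = agent_bytes / chat_bytes

print(f"chatbot session: {chat_bytes / 1e9:.2f} GB of KV cache")
print(f"agent session:   {agent_bytes / 1e9:.2f} GB of KV cache")
print(f"agent / chatbot: {ratio:.0f}x")
```

Under these assumptions the agent session holds 30x the memory of the chatbot exchange, inside the 10-50x range above. Change the tokens-per-step or the step count and the ratio moves with it, which is the point: the multiplier comes from how much context an agent carries, not from the model getting bigger.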

Efficiency gains won't save the bears

The standard pushback is: "AI is getting more efficient, so memory demand will flatten." I'd argue the opposite. Every time AI gets cheaper and more efficient, more people use it for more things. This is the Jevons paradox, and it's already playing out. DeepSeek made AI dramatically cheaper to run. That didn't reduce GPU or memory orders. It accelerated them.

What this newsletter is about

I'm not an analyst. I don't manage a fund. I'm an entrepreneur and operator with a thesis, skin in the game, and a habit of doing the work before I put money somewhere.

Over the coming weeks I'll go deep on each pillar of this thesis: the HBM bottleneck, the China situation, the agentic memory math, the coming adoption wave, and yes, what would actually change my mind. Because any thesis worth holding is worth stress-testing publicly.

If you're tracking the same trade, or think I'm wrong, I want to hear from you.

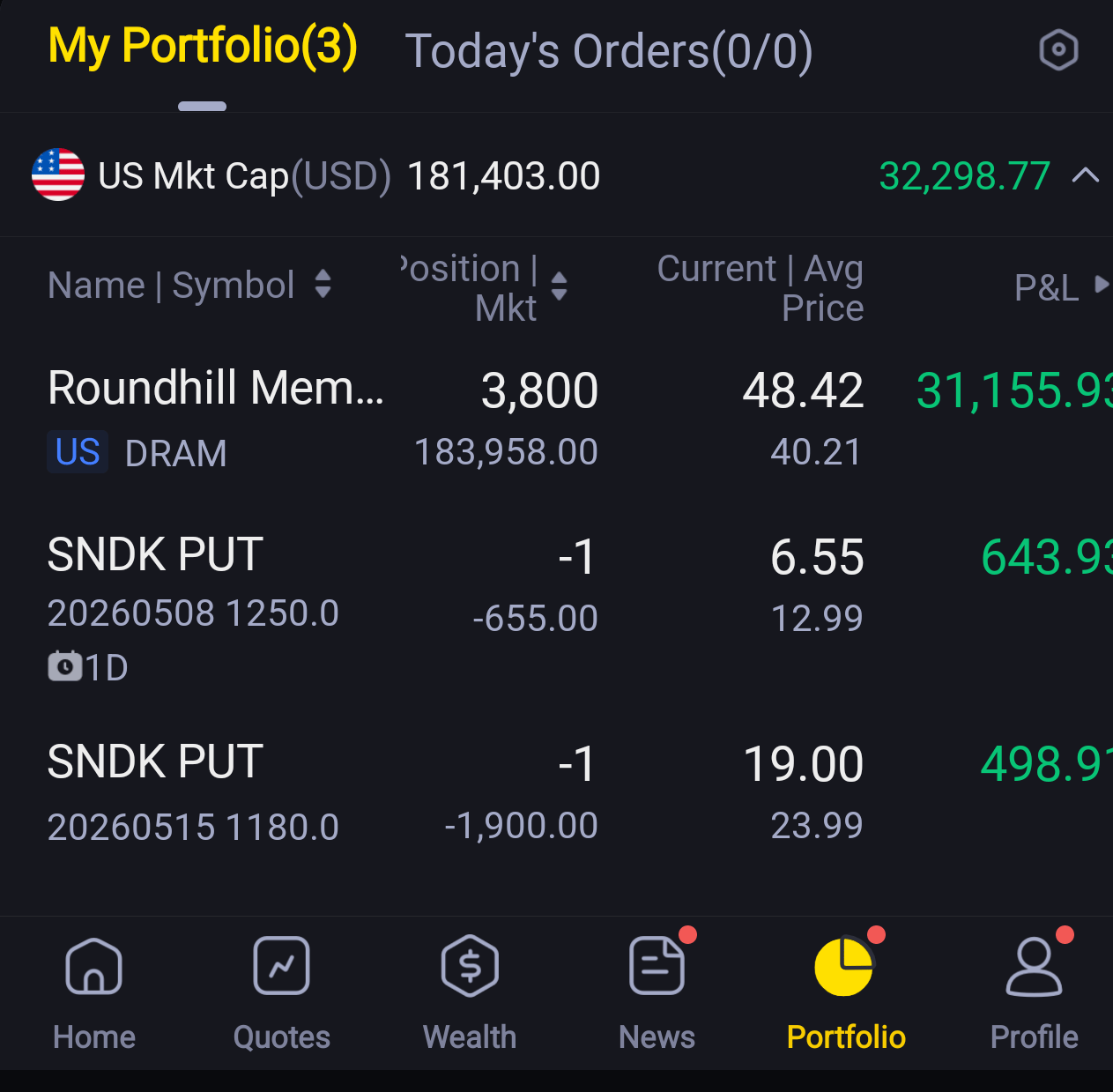

Not investment advice. I hold a position in DRAM ETF (ticker: DRAM). Do your own research.

My current positions as of May 7, 2026. Yes, those are short puts on SNDK. Yes, I'm still bullish. That's a story for the next post.