I run an e-commerce business. I use an agentic AI called Anygen, ByteDance's browser agent, every single day.

Here's what that actually looks like in practice.

Anygen takes over my browser, opens my Google Ads account, pulls performance reports across every campaign, analyzes what's working and what isn't, gives me a prioritized list of optimizations, and then executes them directly inside Google Ads without me touching a single button. It scans my Shopify store for SEO gaps and content improvements, flags them, and fixes them. It writes and deploys front-end code for visual changes to my store theme.

These aren't tasks I didn't know how to do. I know how to run Google Ads. I know basic Shopify development. The problem was always time. Some of these tasks I simply wouldn't attempt before, not because they were beyond me, but because the time cost made them economically irrational. A three-hour manual audit of ad performance across dozens of campaigns, followed by executing fifty individual optimizations? That's a full day's work. I don't have a full day for one task. My job is to grow revenue, not to waste time on busywork.

Now I queue it up, walk away, and come back to a completed job.

That shift, from "I won't do this because it costs too much time" to "I'll let it run in the background while I do something else", is not a productivity improvement. It's a category change. Tasks that didn't exist in my workflow now exist. Work that was economically impossible is now routine.

And I'm barely scratching the surface of what's coming.

The Memory Math Nobody Is Mathing

Here's where most AI demand forecasts go wrong.

They model AI as a query. User types something, model responds, session ends. Memory demand is brief, intense, and finite. The inference happens, the context clears, the next user starts fresh.

Agentic AI doesn't work like that.

When Anygen runs a complex Google Ads optimization, it isn't making one inference call. It's making dozens, sometimes hundreds, of sequential decisions across an extended session. At each step it takes a screenshot of the current browser state, processes what it sees, decides what to do next, executes an action, and repeats. The entire history of the session (every screenshot, every action taken, every result observed) gets carried forward in context. Think of it as the agent's working memory, the way a person keeps the whole job in their head while doing it.

By step 40 of a complex task, the model is processing an enormous context window. Vision inputs, action history, intermediate results, original instructions, error states from things that went wrong and had to be corrected. The memory bandwidth required per session isn't 2x a chatbot query. Depending on task complexity and session length, it's closer to 10 to 50 times more.

Now multiply that across every business, present and future, that deploys what I'm using.
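To make the per-session accumulation concrete, here's a rough back-of-envelope sketch in Python. Every number in it (tokens per screenshot, tokens per action, the size of a chatbot query) is a made-up placeholder, not a measurement from Anygen or any real model, and the function name is my own. It also uses token count as a crude stand-in for memory traffic and ignores caching tricks that real serving stacks use, so treat it as an illustration of the shape of the growth, not the size.

```python
def context_tokens_per_step(steps,
                            instruction_tokens=500,    # hypothetical
                            screenshot_tokens=1000,    # hypothetical
                            action_tokens=100):        # hypothetical
    """Return the context size (in tokens) the model reads at each step,
    assuming the full session history is carried forward every time."""
    sizes = []
    context = instruction_tokens
    for _ in range(steps):
        context += screenshot_tokens   # new screenshot of the browser state
        sizes.append(context)          # model reads everything so far
        context += action_tokens       # the action taken joins the history
    return sizes


CHATBOT_QUERY_TOKENS = 1500  # hypothetical single question-and-answer

sizes = context_tokens_per_step(steps=40)
print(sizes[0])                            # 1500  (step 1)
print(sizes[-1])                           # 44400 (step 40)
print(sum(sizes))                          # 918000 tokens read across the session
print(sizes[-1] / CHATBOT_QUERY_TOKENS)    # 29.6
```

With these placeholder inputs, the context at step 40 is about 30 times a single chatbot query, and the total read across the session is far larger because every step re-reads the history. Change the assumptions and the ratio moves, but the growth with session length doesn't go away.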

The Adoption Curve That Isn't In Any Analyst Model

I'm in several high-level e-commerce masterminds and operator groups. The people in those rooms aren't just using browser agents like I am. They're building with Claude Code. They're constructing complex, multi-agent workflows that make my Anygen usage look elementary. Full automated pipelines, competitor monitoring, inventory management, customer service, ad creative generation and testing, all running simultaneously, all context-heavy, all memory-intensive.

And this is still early adopters. E-commerce operators, marketers, developers, tech-forward businesses. The people who move fast and break things.

The logistics company that ships products I sell hasn't started yet. The manufacturer I source from hasn't started yet. The accountant who files my taxes hasn't started yet. The lawyer who reviews my contracts hasn't started yet.

When those industries start deploying agentic AI, and they will, because the economics are too compelling to ignore, they won't just be running chatbots. They'll be running agents. Long-context, multi-step, browser-controlling, hours-long agents that consume 10 to 50 times the memory bandwidth per session of anything that existed three years ago.

That demand isn't in anybody's HBM forecast. It hasn't started yet. It's the wave behind the wave.

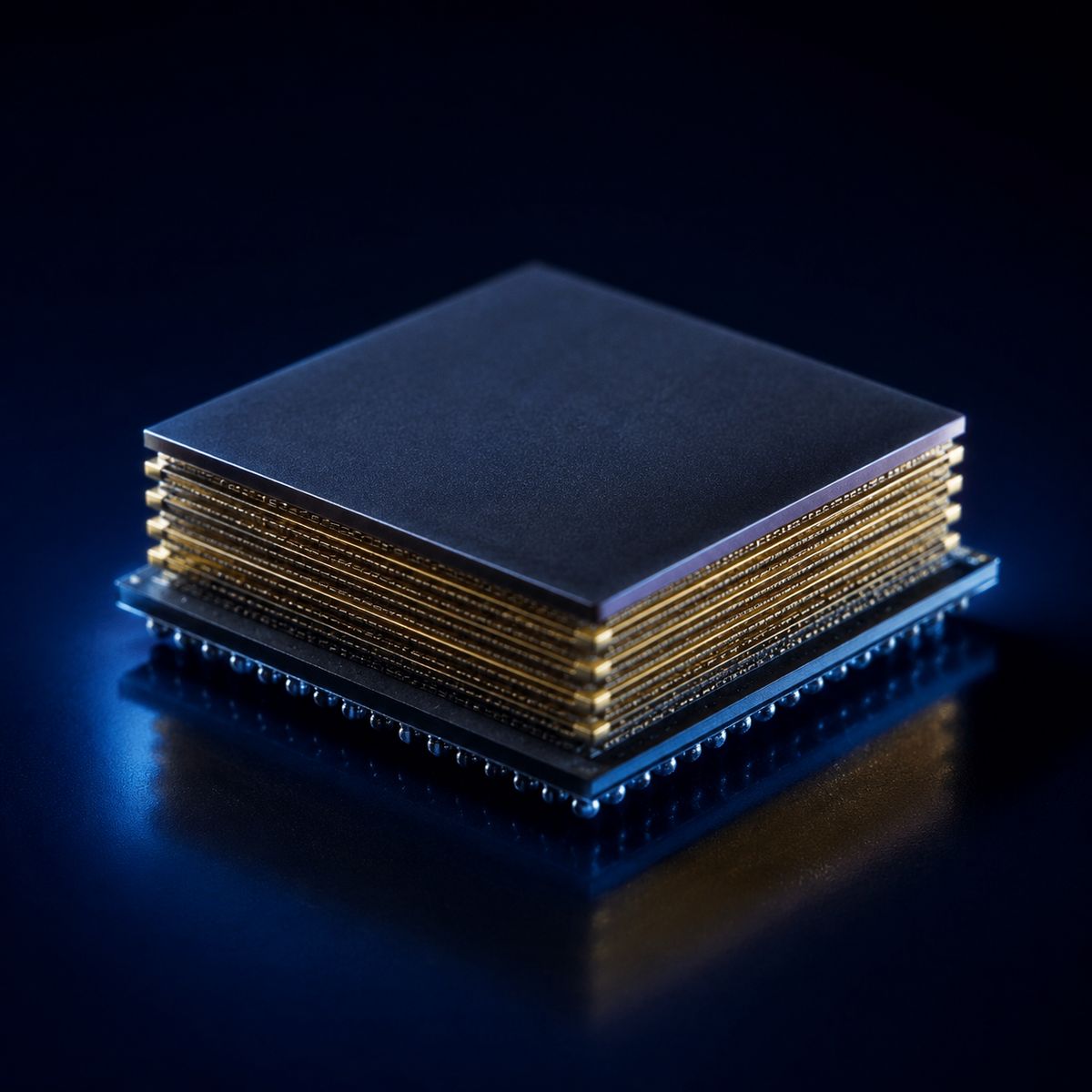

Why This Changes The Supply Math

Hyperscalers aren't just training models anymore. They're running millions of simultaneous agent sessions. Each session needs persistent memory bandwidth throughout its entire duration, not a brief spike during inference, but sustained high-bandwidth access across dozens or hundreds of sequential steps.

SK Hynix is already sold out. Big Tech is offering to fund their factories and getting turned away. The supply constraint that exists today was built around chatbot-era demand assumptions.

Agentic AI is a multiplier on top of a supply chain that already had zero spare capacity.

The Task That Changes Everything

There's a specific moment I keep coming back to. A task I used to look at, calculate the time it would take, and decide wasn't worth doing. Not because it wouldn't add value. It would. But three hours of manual work just wasn't worth it at the time.

Now that task runs in the background while I'm on a call. The economics flipped completely. The value didn't change. The cost dropped to near zero.

Every business owner in the world has a version of that task. A thing they know would help but can't justify the time to do manually. Agentic AI doesn't just make existing tasks faster. It makes previously impossible tasks routine.

That's not an incremental demand increase for memory. That's a structural expansion of what AI is asked to do.

The memory math changes entirely when you realize the universe of tasks being handed to AI isn't fixed. It's growing every time an operator like me discovers something new that agents can do that I couldn't justify doing before.

I'm discovering new stuff for agentic AI to do for me every week. I freed up my time by creating the biggest demand surge in semiconductor history. You're welcome, Samsung.

I hold a long position in DRAM ETF (ticker: DRAM). Not investment advice. Do your own research.

My current positions as of May 14, 2026.