Not because I've changed my mind, but because I'm a realistic investor, not a cheerleader, and any thesis worth holding is worth stress-testing in public. The one risk that keeps me up at night isn't China, isn't Samsung's strike, and isn't a recession. It's the possibility that a software breakthrough makes the hardware bottleneck disappear faster than anyone expects.

Let me explain why this is the one risk I'm genuinely afraid of.

The DeepSeek Warning Shot

In January 2025, a Chinese AI lab, DeepSeek, released a model that matched GPT-4-level performance at a fraction of the compute cost. Nvidia lost nearly $600 billion in market cap in a single day, the largest single-day loss of market value for any company in U.S. stock market history.

The underlying thesis, that AI needs more GPUs, didn't change. Nvidia is still printing money. But the stock fell 17% in one session because the market repriced the narrative before the fundamentals had time to respond.

That's the black swan pattern for memory. Not "the thesis is wrong," but "the narrative shifts faster than the fundamentals, and you get crushed on the way to being right."

The Technologies Actually Worth Watching

Most software optimization stories are overhyped. But a few specific developments deserve genuine attention:

State Space Models (Mamba architecture): Standard transformer attention has a fundamental scaling problem. Every token attends to every other token, so attention compute grows quadratically with context length, and the key-value cache the model must keep in memory grows with every token in the context. As context windows get longer (and agentic AI requires very long context), memory demand explodes. Mamba and similar state space models process sequences in linear time with a fixed-size state, avoiding this scaling. That directly attacks the mechanism that makes long-context agentic AI so memory-hungry. They're still early stage and not yet competitive with transformers at scale, but the academic momentum is real.
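
To put rough numbers on the memory side, here is a back-of-the-envelope sketch. The model shapes (32 layers, 8 key-value heads, 128-dim heads, 16-bit values, a 4,096-wide SSM with a 16-dim state) are hypothetical round numbers chosen for illustration, not the specs of any real model, and `kv_cache_bytes` / `ssm_state_bytes` are illustrative helper names. What matters is the shape: the transformer's key-value cache grows with every token in context, while a Mamba-style model carries the same fixed-size state however long the sequence gets.

```python
# Hypothetical model shapes for illustration only; not any real model's specs.
MiB = 1024**2
GiB = 1024**3

def kv_cache_bytes(seq_len, layers=32, kv_heads=8, head_dim=128, bytes_per_elem=2):
    """Memory a transformer holds for cached keys and values over a context.
    The leading 2 counts K and V; the total grows linearly with seq_len."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_elem

def ssm_state_bytes(layers=32, d_inner=4096, d_state=16, bytes_per_elem=2):
    """Recurrent state a Mamba-style model carries between tokens.
    Independent of seq_len. Simplified: ignores the small conv buffer."""
    return layers * d_inner * d_state * bytes_per_elem

for n in (8_192, 131_072):
    print(f"{n:>7} tokens: KV cache {kv_cache_bytes(n) / GiB:.2f} GiB, "
          f"SSM state {ssm_state_bytes() / MiB:.2f} MiB")
```

Under these assumed shapes, going from 8K to 128K tokens takes the KV cache from 1 GiB to 16 GiB per session, while the SSM state stays at 4 MiB. Multiply by thousands of concurrent agent sessions and the difference is the whole story for memory demand.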

Mixture of Experts (MoE): Instead of activating the entire model for every inference, MoE routes each token through only a small subset of "expert" parameters. GPT-4 reportedly uses this architecture. The memory implication: you can run a very large model while reading far fewer parameters per token, which cuts compute and memory bandwidth per inference, even though all the experts still have to be stored. This is already deployed at scale and already compressing memory requirements in ways that 2023 demand forecasts didn't account for.
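
Here is a minimal sketch of what "only the relevant subset" means, assuming the top-k routing design common in published MoE models (GPT-4's actual configuration isn't public). The router scores, expert count, k=2, and parameter sizes are all hypothetical. The sketch makes one distinction explicit: every expert still has to be stored, but each token only reads the chosen experts' weights.

```python
import math

def top_k_experts(router_logits, k=2):
    """Pick the k experts with the highest router scores and softmax-normalize
    their weights. Only these experts run for this token."""
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:k]
    exps = [math.exp(router_logits[i]) for i in chosen]
    total = sum(exps)
    return {i: e / total for i, e in zip(chosen, exps)}

def moe_param_counts(num_experts, k, expert_params, shared_params):
    """Parameters that must be stored vs. parameters read per token."""
    stored = shared_params + num_experts * expert_params
    active = shared_params + k * expert_params
    return stored, active

# Hypothetical router output for one token across 8 experts.
routing = top_k_experts([0.1, 2.0, -1.0, 1.5, 0.3, 0.0, -0.5, 0.9], k=2)
print(routing)  # experts 1 and 3 receive this token

# Hypothetical sizes: 8 experts of 5B params each plus 2B shared params.
stored, active = moe_param_counts(num_experts=8, k=2,
                                  expert_params=5e9, shared_params=2e9)
print(f"stored: {stored / 1e9:.0f}B params, read per token: {active / 1e9:.0f}B")
```

With these made-up sizes, the model stores 42B parameters but touches only 12B per token, so per-token compute and bandwidth shrink while the storage footprint does not.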

Model Quantization: Running models at 4-bit precision instead of 16-bit cuts the memory needed for weights by roughly 4x, with acceptable quality loss for many applications. This is mainstream now, not experimental; every inference optimization company is deploying it. It has already happened, and it already partially explains why inference efficiency has improved faster than raw demand growth would suggest.
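
The 4x figure is bit-width arithmetic for the weights. The sketch below assumes a hypothetical 70B-parameter model, computes its weight memory at 16 and 4 bits, and shows a toy version of symmetric 4-bit quantization on one small, made-up group of weights. Production schemes (per-group scales, outlier handling) differ in the details and add a little overhead for the scales, so real savings typically land slightly under 4x.

```python
def weight_memory_gib(num_params, bits):
    """Memory for the weights alone at a given precision. Ignores scale-factor
    overhead, activations, and the KV cache."""
    return num_params * bits / 8 / 1024**3

def quantize_int4_symmetric(values):
    """Toy symmetric 4-bit quantization of one group of weights: map floats
    to integers in [-7, 7] using a single shared scale."""
    scale = max(abs(v) for v in values) / 7 or 1.0  # fall back if all zeros
    q = [max(-7, min(7, round(v / scale))) for v in values]
    return q, scale

def dequantize(q, scale):
    """Recover approximate float weights from the 4-bit integers."""
    return [x * scale for x in q]

params = 70e9  # hypothetical 70B-parameter model
print(f"16-bit: {weight_memory_gib(params, 16):.1f} GiB, "
      f"4-bit: {weight_memory_gib(params, 4):.1f} GiB")

group = [0.12, -0.50, 0.33, 0.07, -0.21]  # made-up weights
q, s = quantize_int4_symmetric(group)
restored = dequantize(q, s)
print(q, [round(r, 3) for r in restored])
```

The "acceptable quality loss" is the rounding error visible here: each restored weight is off by at most half a quantization step.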

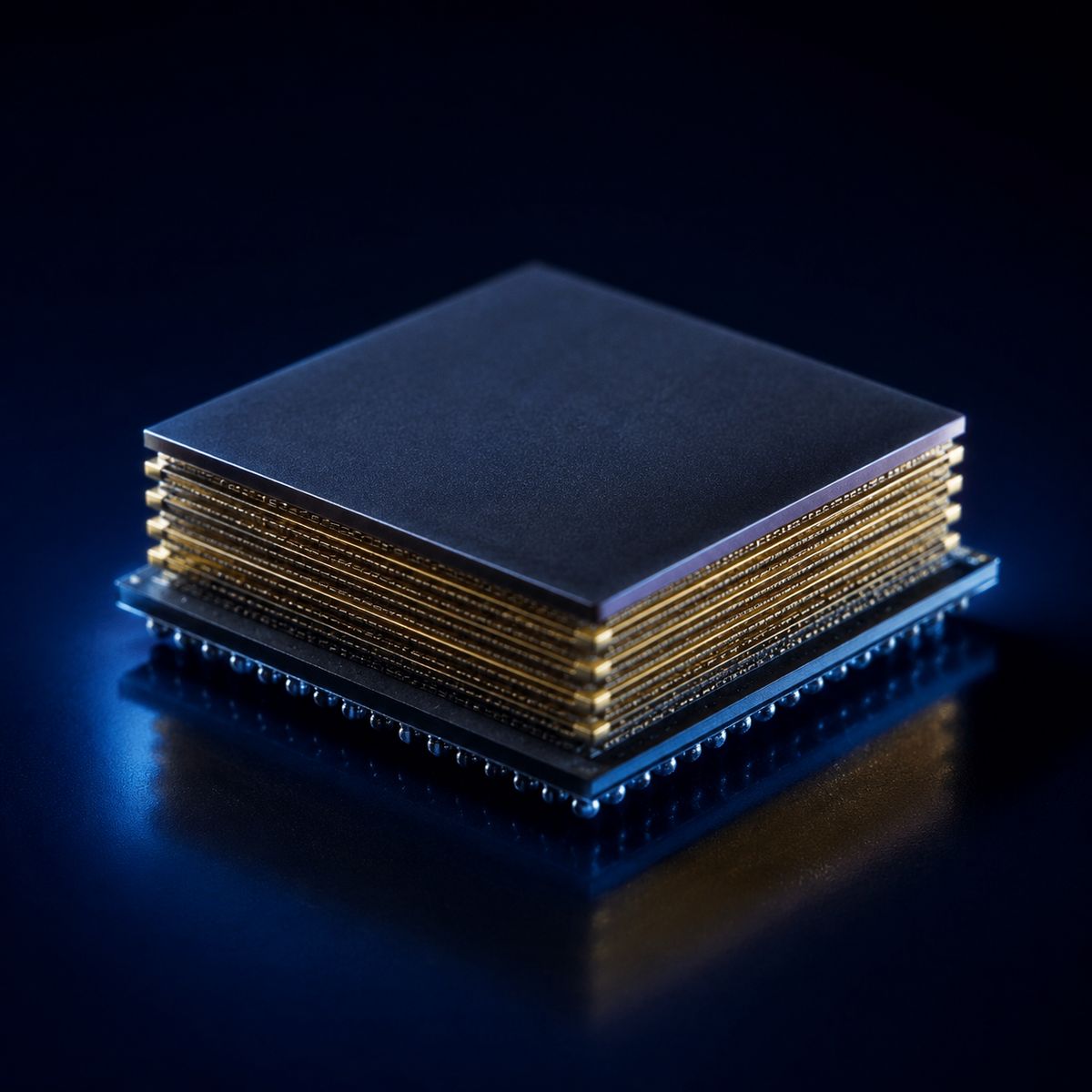

Processing-in-Memory (PIM): Here's the uncomfortable one. Samsung and SK Hynix are both developing chips that integrate processing logic directly inside the memory itself. The bandwidth bottleneck that makes HBM so valuable exists because data has to travel between memory and processor. PIM eliminates much of that travel. If it works at scale, HBM's architectural advantage narrows significantly.
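
Why bandwidth, not raw compute, is the bottleneck: when a model generates one token at a time, it streams its weights from memory for every token, and each weight is used for only a multiply and an add. A rough sketch, assuming a hypothetical 140 GB of 16-bit weights and roughly 3 TB/s of memory bandwidth (illustrative numbers, not a specific chip):

```python
def decode_time_floor_ms(weight_bytes, bandwidth_bytes_per_s):
    """Single-stream decoding reads every weight once per token, so memory
    bandwidth sets a latency floor no matter how fast the compute units are."""
    return weight_bytes / bandwidth_bytes_per_s * 1e3

def gemv_arithmetic_intensity(bytes_per_weight):
    """FLOPs per byte moved in a matrix-vector product: each weight is read
    once and used for one multiply and one add (2 FLOPs)."""
    return 2 / bytes_per_weight

weights = 140e9  # hypothetical: 70B params at 2 bytes each
bandwidth = 3e12  # hypothetical: ~3 TB/s
print(f"latency floor per token: {decode_time_floor_ms(weights, bandwidth):.1f} ms")
print(f"intensity at 16-bit: {gemv_arithmetic_intensity(2):.1f} FLOP/byte")
```

At about one FLOP per byte, the processor spends most of its time waiting on memory (batching many requests raises that ratio). Doing this kind of low-intensity math next to the data is the cost PIM is trying to remove.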

The irony: your thesis holdings are funding the technology most likely to disrupt their own product. They know this. It's why they're developing it themselves. They'd rather cannibalize their own margins than let a startup do it.

Why Jevons Probably Saves You, But Not Immediately

Every time computing got cheaper and more efficient, total consumption went up. This is the Jevons paradox, and the historical record is unambiguous. More efficient AI will get deployed more broadly, run more agent sessions, and likely consume more total memory even if each session uses less.

I believe this. But here's the honest reality: Jevons works over years, not weeks. A dramatic efficiency breakthrough (say, something 10x more efficient) that arrives faster than adoption can absorb it creates a demand air pocket. The stock market prices that air pocket immediately. You could be right about the 5-year thesis and still suffer a 40% drawdown in the next 6 months if the narrative shifts. The market is not always rational.
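
A toy model of the air pocket, under loudly hypothetical assumptions: total memory demand is sessions times memory per session, sessions grow 30% per quarter, and a one-time 10x efficiency jump lands in quarter 4. None of these numbers are forecasts, and `demand_path` and `quarters_to_recover` are illustrative helpers.

```python
import math

def demand_path(quarters, base_sessions, growth_per_q, mem_per_session,
                shock_quarter, efficiency_gain):
    """Total memory demand = sessions x memory per session.
    Sessions compound at growth_per_q; from shock_quarter on, each session
    needs efficiency_gain times less memory."""
    path = []
    for q in range(quarters):
        sessions = base_sessions * (1 + growth_per_q) ** q
        per_session = (mem_per_session / efficiency_gain
                       if q >= shock_quarter else mem_per_session)
        path.append(sessions * per_session)
    return path

def quarters_to_recover(growth_per_q, efficiency_gain):
    """Quarters of adoption growth needed for demand to climb back above
    its last pre-shock level after a one-time efficiency jump."""
    return math.ceil(math.log(efficiency_gain) / math.log(1 + growth_per_q))

path = demand_path(16, 1.0, 0.30, 1.0, shock_quarter=4, efficiency_gain=10)
print([round(d, 2) for d in path])
print(quarters_to_recover(0.30, 10))  # 9
```

With these inputs, demand falls sharply the quarter the breakthrough lands and takes nine quarters of growth to get back above its pre-shock peak. Jevons still wins, but over two-plus years, which is exactly the window where the stock can get crushed.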

That's not hypothetical. It's exactly what happened to Nvidia in 2022. The thesis was right. The drawdown was brutal. The investors who held through it had to have conviction that went beyond normal human tolerance for paper losses.

The Signal I'm Actually Watching

I'm not monitoring memory spot prices as my primary signal. By the time pricing data shows a demand disruption, it's too late to exit cleanly.

I'm watching two leading indicators instead:

VC deal flow into memory-efficient AI inference. When Sequoia or a16z writes a $200M+ check into a startup with a credible benchmark showing 5x+ memory efficiency at scale, that's the market pricing in disruption 12 to 18 months before it hits earnings. That check hasn't been written at that scale yet. When it is, I'll be trimming.

AI research paper momentum on non-transformer architectures. Specifically, whether state space models start matching or exceeding transformer performance on long-context tasks. Right now they don't. If that changes and it gets mainstream attention, the narrative shift follows quickly.

Neither signal is flashing right now. But I check both regularly.

What This Means For My Position

I'm not selling. The black swan scenario requires a specific combination of events: a breakthrough large enough to matter, arriving faster than adoption grows, and sustained long enough to actually show up in HBM demand data. Each of those conditions is possible. All three at once is a low-probability outcome within my 1-3 year window.
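
The "all three at once" logic is the chain rule of probability: the joint odds are the first probability times each later one, conditional on the earlier ones having happened. The inputs below are placeholders for illustration, not estimates from this analysis.

```python
def joint_probability(p_first, *conditionals):
    """Chain rule: P(A and B and C) = P(A) * P(B | A) * P(C | A and B)."""
    result = p_first
    for p in conditionals:
        result *= p
    return result

# Placeholder inputs, for illustration only:
p_breakthrough = 0.30      # P(a breakthrough large enough to matter)
p_outpaces_given = 0.40    # P(it outpaces adoption | breakthrough)
p_sustained_given = 0.50   # P(it shows up in HBM demand data | both above)

risk = joint_probability(p_breakthrough, p_outpaces_given, p_sustained_given)
print(f"joint probability: {risk:.2f}")  # 0.06
```

If the conditions are independent, each conditional is just the standalone probability. If a big enough breakthrough makes the other two more likely, the conditionals rise and the joint risk rises with them, which is the case worth stress-testing.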

But I hold this position knowing the risk exists. I've sized accordingly — meaningful enough to matter if I'm right, not so large that the black swan scenario ends me if I'm wrong. DRAM is a significant position for me, not a lottery ticket.
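
The sizing logic reduces to one line of arithmetic: a position's weight times its drawdown is the hit to the whole portfolio. The 40% drawdown echoes the scenario above; the 10% loss tolerance is a hypothetical example, and real drawdowns can exceed any assumption.

```python
def portfolio_hit(weight, drawdown):
    """Fraction of the total portfolio lost if this position falls by `drawdown`."""
    return weight * drawdown

def max_weight(loss_tolerance, assumed_drawdown):
    """Largest position weight that keeps the hit within `loss_tolerance`."""
    return loss_tolerance / assumed_drawdown

w = max_weight(loss_tolerance=0.10, assumed_drawdown=0.40)  # hypothetical inputs
print(f"max weight: {w:.0%}, hit at that weight: {portfolio_hit(w, 0.40):.0%}")
```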

The most dangerous investor is the one who can only see the upside. I can see this going wrong, and I know which signals would tell me it's happening before it shows up in the price.

That's what risk management actually looks like for me.

I hold a long position in the DRAM ETF (ticker: DRAM). Not investment advice. Do your own research.